CircleID: ICDAR 2026 Competition on Writer and Pen Identification from Hand-Drawn Circles

Challenge update (5 March 2026)

We released an updated version of the CircleID dataset to fix an issue in the previous evaluation data. Only the evaluation split was affected. The training data and file format are unchanged, so no retraining is required.

- The evaluation files were replaced and `test.csv` was updated accordingly. New evaluation filenames use a `v2_` prefix.

- Please re-download the competition data, then run inference on the new `test.csv` and resubmit.

- The leaderboard has been rescored to keep comparisons fair, so score shifts are expected.

- The previous test set is now available as optional extra training data in `additional_train.csv`.

- The new test set contains previously unseen writers who appear in neither the training data nor the additional training data.

- Writer identities for unknown writers in `additional_train.csv` remain labeled as `writer_id = -1` by design.

Description

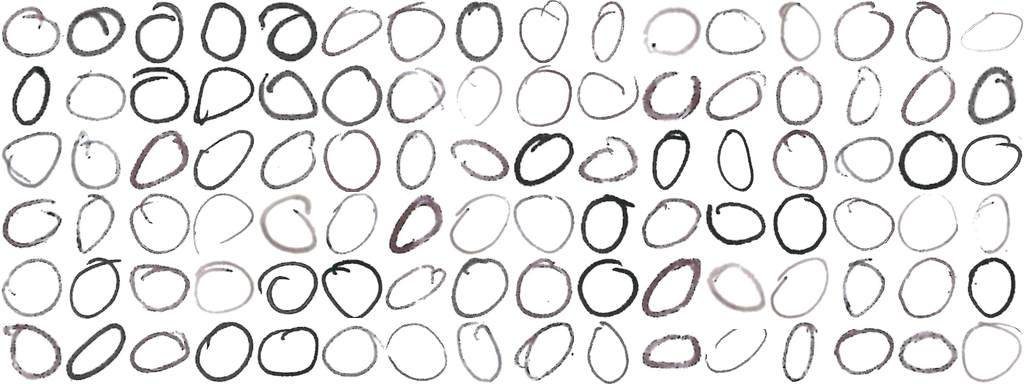

CircleID is the ICDAR 2026 competition on identifying who drew a circle and which pen was used, using only scanned images of hand-drawn circles. Although a circle is a simple shape, it contains rich, subtle cues from both human motor behavior and pen/ink characteristics. The challenge is to learn representations that disentangle writer style from pen properties in static images.

Participants receive a new dataset of 46155 scanned circle images collected under controlled conditions from more than 51 writers and 8 pens. Two tasks are evaluated: (1) writer identification (with an "unknown writer" class) and (2) pen classification.

Tasks

CircleID features two related classification tasks on static images of hand-drawn circles. The goal is to learn visual representations that separate writer-specific traits from pen-specific traits (ink deposition, stroke texture, width).

Task 1: Writer Identification

- Objective: Predict the writer ID for circles drawn by known writers. For circles drawn by writers not present in training, output `-1` (unknown writer).

- Out-of-distribution: The test set includes circles from unseen writers (not present in training). For these samples, participants must output `-1` as the predicted writer ID.
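One common way to produce the `-1` output is to reject low-confidence predictions. The sketch below assumes a classifier that returns one probability per known writer. The function name `predict_writer`, the `known_ids` list, and the default threshold of 0.5 are illustrative assumptions, not part of the competition rules or the official baseline.

```python
def predict_writer(probs, known_ids, threshold=0.5):
    """Return the most likely known writer ID, or -1 if confidence is low.

    probs      -- per-class probabilities, aligned with known_ids
    known_ids  -- writer IDs seen during training (hypothetical ordering)
    threshold  -- assumed rejection cutoff; should be tuned on held-out data
    """
    if len(probs) != len(known_ids):
        raise ValueError("probs and known_ids must have the same length")
    # Index of the highest-probability class.
    best = max(range(len(probs)), key=lambda i: probs[i])
    # Reject the prediction when the top probability is below the cutoff.
    return known_ids[best] if probs[best] >= threshold else -1
```

For example, `predict_writer([0.1, 0.8, 0.1], [3, 7, 12])` returns `7`, while `predict_writer([0.4, 0.35, 0.25], [3, 7, 12])` returns `-1` because no class reaches the cutoff. A fixed threshold is only one option; other open-set strategies may work better on this data.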

Task 2: Pen Classification

- Objective: Predict which of the predefined pen types was used to draw each circle. This task tests robustness across both known and unseen writers.

- Applies to all test images: A pen prediction is required for every sample, including those from unseen writers.

Key challenges include feature entanglement (writer habits vs. pen properties) and high intra-writer variability despite strong inter-writer similarity.

Dataset

The CircleID dataset contains 46155 tightly-cropped images of hand-drawn circles collected in a controlled study with 51 known writers and a pool of unseen writers using 8 different pens. Templates were digitized with high-resolution scans (400 dpi), and each circle was automatically extracted and manually linked to ground truth labels.

- Images: PNG files, one circle per image.

- Labels: Writer ID and pen ID (only provided for training set).

Data split

The released data are organized into labeled training data, optional additional training data, and a held-out evaluation set to assess both writer identification and generalization for pen classification.

- Training data: `train.csv` contains the original labeled training split.

- Additional training data: `additional_train.csv` contains the previous test set and may be used as optional extra training data.

- Unknown writers in additional data: Writer identities for unknown writers are not disclosed and therefore remain labeled as `writer_id = -1` by design.

- Evaluation set: `test.csv` contains 5905 images for evaluation. It includes both writers represented in the training data and previously unseen writers who appear in neither `train.csv` nor `additional_train.csv`.

The dataset can be accessed through the Kaggle competition pages (Writer Identification & Pen Classification). Ground truth for the test set remains confidential during the competition.

Training data

- Images: Directory of `.png` files.

- Manifest: `train.csv` with columns: `image_id`, `image_path`, `writer_id`, `pen_id`.

Additional training data

- Images: Directory of `.png` files.

- Manifest: `additional_train.csv` with columns: `image_id`, `image_path`, `writer_id`, `pen_id`.

- Note: For unknown writers in this file, `writer_id` remains `-1` by design.
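The two labeled manifests share the same columns, so they can be read with the same helper. The following is a minimal sketch, assuming both files are comma-separated with a header row matching the column names above and that `writer_id` is stored as an integer. The helper names `load_manifest` and `split_known_unknown` are hypothetical.

```python
import csv


def load_manifest(path):
    """Read a labeled manifest CSV into a list of dicts."""
    with open(path, newline="") as f:
        rows = list(csv.DictReader(f))
    for row in rows:
        # Assumption: writer_id is an integer, with -1 marking unknown writers.
        row["writer_id"] = int(row["writer_id"])
    return rows


def split_known_unknown(rows):
    """Separate rows with a disclosed writer from rows labeled -1."""
    known = [r for r in rows if r["writer_id"] != -1]
    unknown = [r for r in rows if r["writer_id"] == -1]
    return known, unknown
```

A typical use would be `rows = load_manifest("train.csv") + load_manifest("additional_train.csv")`, followed by `split_known_unknown(rows)`. The rows labeled `-1` still carry a `pen_id`, so they remain usable for the pen task.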

Test data

- Images: Directory of `.png` files.

- Manifest: `test.csv` with columns: `image_id`, `image_path`.

- Ground truth: Not provided for the test set.

Submission Format

Your submission

CircleID is hosted as two separate Kaggle competitions with independent leaderboards and submission files: one for writer identification and one for pen classification.

- Writer Identification competition: Submit `submission_writer.csv` with columns `image_id`, `writer_id`. For unseen writers, set `writer_id` to `-1`.

- Pen Classification competition: Submit `submission_pen.csv` with columns `image_id`, `pen_id`. A pen prediction is required for all test images.

- Writer Identification: ICDAR 2026 - CircleID: Writer Identification

- Pen Classification: ICDAR 2026 - CircleID: Pen Classification
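Both submission files have the same two-column layout, so one helper can write either. This is a minimal sketch using Python's standard `csv` module. The function name `write_submission` and the shape of `predictions` (an iterable of `(image_id, label)` pairs) are assumptions made for illustration.

```python
import csv


def write_submission(path, label_column, predictions):
    """Write (image_id, label) pairs to a two-column submission CSV.

    label_column -- "writer_id" or "pen_id", depending on the competition
    predictions  -- iterable of (image_id, label) pairs (hypothetical shape)
    """
    if label_column not in ("writer_id", "pen_id"):
        raise ValueError("label_column must be 'writer_id' or 'pen_id'")
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["image_id", label_column])
        for image_id, label in predictions:
            writer.writerow([image_id, label])
```

For the writer task, a call might look like `write_submission("submission_writer.csv", "writer_id", preds)`, where `preds` contains `-1` for images judged to come from unseen writers. Checking that the file has one row per entry in `test.csv` before uploading is a sensible precaution.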

Evaluation

CircleID includes two independent leaderboards, one per task. Rankings are based on top-1 accuracy.

Task 1 Leaderboard: Writer Identification

- Metric: Top-1 accuracy.

- Evaluation data: The full evaluation set. For circles drawn by writers whose identities are labeled in the training data, predict the correct writer ID. For circles drawn by unseen writers, predict `-1`.

Task 2 Leaderboard: Pen Classification

- Primary metric: Top-1 accuracy.

- Supplementary metric: Macro-averaged F1-score (reported for analysis).

- Evaluation data: The full evaluation set to measure robustness across both seen and unseen writers.
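For local validation, both metrics can be computed without external libraries. The sketch below follows the common definitions: top-1 accuracy is the fraction of exact matches, and macro-averaged F1 is the unweighted mean of per-class F1 over every label that occurs in the ground truth or the predictions. The exact label set used by the organizers' scoring is not specified here, so treat this as an approximation of the leaderboard computation.

```python
def top1_accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the true label."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    labels = set(y_true) | set(y_pred)
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)
```

With `y_true = [0, 0, 1, 1]` and `y_pred = [0, 1, 1, 1]`, the accuracy is 0.75 and the per-class F1 scores are 2/3 and 0.8. For the writer task, `-1` is treated like any other label when computing accuracy.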

Leaderboard protocol: During the competition, the leaderboard is computed using only 30% of the test set annotations (public leaderboard). The remaining 70% of the test set annotations are held back (private leaderboard) and are used to update the final rankings at the end of the challenge.

Winners will be announced for each leaderboard at the conclusion of the competition.

Baseline

We provide a simple but effective baseline to help participants get started and to establish an initial benchmark.

The baseline is a ResNet model pre-trained on ImageNet and fine-tuned on CircleID.

A single training script supports both tasks via a TASK switch.

- Backbone: ResNet feature extractor pre-trained on ImageNet.

- Head: One task-specific fully connected classification layer.

- Two modes: Set `TASK="writer"` for writer ID prediction, or `TASK="pen"` for pen ID prediction.

- Code: Training and inference code are located in the Kaggle Challenges (Writer Identification & Pen Classification) and on GitHub.

Timeline

- February 3, 2026: Registration and Kaggle submissions open; training data with ground truth released; hold-out test set without ground truth released.

- March 5, 2026: Updated hold-out test set without ground truth released.

- April 3, 2026: Official submission deadline.

- April 17, 2026: Submission of the initial competition report.

- May 4, 2026: Camera-ready report submission.

- June 22, 2026: Winners communicated to the chairs.

Note: Submissions remain open after the official end of the competition to support ongoing benchmarking.

Contact

For questions about the CircleID competition (dataset access, rules, submissions, evaluation), please contact the organizers:

- E-Mail: thomas.gorges@fau.de

- Kaggle discussion (Writer Identification): Discussion Forum

- Kaggle discussion (Pen Classification): Discussion Forum